Exhaustive Search

My program for finding 2LBB Busy Beavers can be found on github. This page explains why this search is hard and describes several tricks used by the search program.

Combinatorial explosion

Searching for Busy Beavers is hard because of the combinatorial explosion. There are two problems.

First, the number of possible programs increases exponentially with the size of the grid. For an n×n grid, there are 3n2 possible programs.

Second, the run length also increases. In fact, I expect the maximum run length to increase more than exponentially. Although I have not yet read the proof for the non-computability of Turing-Machine Busy Beavers, I assume the same reasoning can be applied to 2LBB programs as there is no fundamental difference. This would mean that the maximum run length is a non-computable function. At any rate, the time required to evaluate individual programs goes up quickly for increasing grid sizes.

So it is clear that there are limits to the Busy Beavers that can be found. Various optimizations can, however, speed up the search significantly.

Ignore "Don't Care" instructions

Many programs are effectively the same. For a given program the program pointer (PP) only visits a subset of cells on the grid. For the cells it does not visit, it does not matter what instruction they contain. So an important optimization is to not evaluate multiple programs that only differ in these "Don't Care" instructions.

Figure 1 shows these Don't Care instructions for an example program. This program has eleven Don't Care instructions. By ignoring these, for this program alone, the search skips needless evaluation of 311 - 1 programs.

Ignoring Don't Cares can be done by letting the search lazily instantiate instructions: an instruction is instantiated when it is first encountered during program execution. So program execution and search are combined. As Don't Care cells are not visited, they remain unset. The pseudo-code below illustrates the basic idea. It is optimized for clarity, not performance.

// The recursively growing program

Program program

// Execution state: data, Program Pointer, number of steps

ExecutionState execState

function branch():

for ins in [NOOP, DATA, TURN]:

ExecutionState execState0 = execState.copy()

program[execState.pp] = ins

run()

execState = execState0

program[execState.pp] = UNSET

function run():

while true:

ins = program[execState.pp]

switch ins:

case UNSET:

branch()

return

case DONE:

reportDone(program, execState)

return

default:

// Updates PP, Data and numSteps

execState.execute(ins)

Table 1 gives an indication of the speed up that is achieved. The second column lists the total number of possible programs. The third column gives the total number of distinct programs, after ignoring Don't Care instructions. The actual speed up is less than the ratio of both these numbers as the recursion entails some cost. In particular, an undo history needs to be maintained for all operations that modify state, including changes to the data tape. Nevertheless, these extra costs are clearly outweighed by the huge reduction in the number of programs to evaluate.

| Size | #Programs (Total) |

#Programs (Ignoring Don't Cares) |

|---|---|---|

| 3×3 | 2.0 · 104 | 59 |

| 4×4 | 4.3 · 107 | 8.9 · 102 |

| 5×5 | 8.5 · 1011 | 6.0 · 104 |

| 6×6 | 1.5 · 1017 | 1.9 · 107 |

Ignore equivalent DATA permutations

For DATA instructions that are encountered before the first TURN, their exact location does not matter. It only matters how many there are. This follows from the fact that these instructions are only evaluated once, right at the start of the program, and at most once more, just before the program terminates.

For example, Figure 2 shows a program that is effectively the same as the one in Figure 1. The DATA instruction could be moved to any cell underneath the TURN instruction in the first column without impacting the run length of this program (or any other possible program with the same start). Figure 3 and 4 show the only ways in which instructions that are executed before the first TURN can be executed twice. Whether PP passes through such an early DATA instruction for a second time does not impact execution length.

When the first TURN is the second instruction that is executed, PP traverses the bottom row. Here similarly the location of DATA instructions before the second TURN does not matter, only their number. Figure 5 shows an example. Where the second DATA instruction is placed on the bottom row does not impact execution length of any of the possible programs that may follow the second TURN.

Table 2 shows how ignoring these equivalent DATA permutations further reduces the search space. For the grid sizes shown the reduction in size is approximately a factor two, and will go up for larger grid sizes. Although the speed up is relatively small compared to the previous improvement there is no performance penalty associated with it. It is also straightforward to implement so there is no reason not to exploit it.

| Size | #Programs (Only ignoring Don't Cares) |

#Programs (Also ignoring Data Permutations) |

|---|---|---|

| 3×3 | 59 | 31 |

| 4×4 | 8.9 · 102 | 5.2 · 102 |

| 5×5 | 6.0 · 104 | 3.1 · 104 |

| 6×6 | 1.9 · 107 | 8.0 · 106 |

"On the fly" interpretation

In order to speed up execution and to facilitate hang detection, the generated 2L programs are interpreted on the fly. Instead of moving PP one step at a time, the program is converted into Program Blocks.

A Program Block always contains one or more DATA instructions. It ends at the first TURN that follows a DATA instruction. This implies that when the block contains multiple DATA instructions, they all evaluate to the same operation. A block can contain multiple TURNs. These extra TURN instructions then occur before the first DATA instruction.

The interpreted program for the program in Figure 5 is as follows:

0: INC 3 => -/1

1: SHR 1 => 2/3

2: INC 1 => -/4

3: SHL 1 => EXIT

4: DEC 1 => 5/6

5: SHL 1 => 7/4

6: SHR 1 => 5/6

7: DEC 1 => -/3Each line is a program block. From left to right it consists of the following:

- A unique ID

- The operation to carry out

- The next program block to execute. When the data value at DP is zero, the first block should be executed, otherwise the second

Some blocks only have one possible continuation. This is the case when they are entered with a zero value and carry out a INC or DEC operation. In this case the value is always non-zero at the end of the block.

As the program grid is generated on the fly by the search, program blocks can have two states:

- Pending: Its operation and continuation program blocks are still unset. This happens when the program block depends on a program cell that is still unset.

- Finalized: Its operation and continuation program blocks are set.

A block is finalized as soon as its operation is known. Its continuation program blocks are then also set. These may already exist, but if not, will be created. In both cases, their state can be Pending or Finalized.

Hang detection

It is also important to automatically detect hangs. Without doing so the search can only "assume" that a program hangs when its execution exceeded a pre-configured number of steps. Using this cut-off limit is problematic for two reasons. First, it slows down the search. This is especially the case when this limit is high, which it needs to be for bigger grids. Second, it also risks missing the Busy Beaver or a Busy Beaver Champion. This happens when the cut-off limit is lower than the run length of the program.

Detecting all possible hangs is equivalent to solving the Halting Problem, which is known to be impossible. However, as long as a program is "simple enough" it is possible to automatically determine when it hangs.

For more details about detecting hangs see:

Phased search

On 7×7 grids and larger the possible execution length of programs goes up rapidly. To search efficiently, a phased search approach is used. The search configuration varies per phase. There are three phases:

- Hang detection and back-tracking are both enabled,

- Hang detection is disabled, but the search still backtracks, and

- Hang detection and back-tracking are both disabled.

Initially, hang detection is enabled and the search recursively expands the program as it is being evaluated. This is the phase during which the large majority of possible programs is classified. They either terminate relatively quickly or are detected to be hanging.

Hang detection, however, incurs a performance penalty and uses memory to store required run history and data snapshots. This is not effective anymore after a while. Either the program will terminate successfully, in which case hang detection is only slowing down execution. Alternatively the program hangs but then it's unlikely that the hang detection logic detects this, given that it has not yet done so. Therefore, after a pre-configured number of execution steps, the search enters the second phase. In the second phase, hang detection is disabled.

The actual implementation of the search program for rewinding execution state is different than the pseudo-code shown above. It tracks individual data operations and undoes these. This is more efficient early on in the search, when search space branches quickly which involves considerable backtracking. However, it incurs fixed execution overhead per data operation and the required memory grows linearly with the program's execution length. Therefore, after a while this is not effective anymore either. So in the third and final phase, backtracking is disabled as well.

Once backtracking is disabled, that part of the search space cannot be expanded further. Instead, the search switches to very fast execution of the current (interpreted) program. This may result in the following outcomes:

- The program terminates successfully.

- Execution exceeds another pre-configured cut-off limit of execution steps. The program is then assumed to hang.

- During execution the program reaches an instruction that is still unset. This is reported as a late escape. Such a program should be evaluated further at a later stage (see next section).

Staged search

The phases from the previous section switch constantly during a search. However, to efficiently search for 7×7 Busy Beavers, at a higher level, the search is furthermore divided into stages.

The first search stage tries to correctly classify the large majority of 7×7 2LBB programs. The run-length cut-off is one million steps. Within this limit 99.99% of programs are classified as either terminating or hanging. Only for about 0.01% of programs the status is not clear. These may hang or terminate eventually.

The focus of the second stage is therefore on executing these remaining programs as quickly as possible. For that reason, hang detection and back-tracking are permanently disabled. The run-length cut-off is much larger, for example, one billion steps. Three things may happen for each program that is evaluated. First, it terminates which means a long-running program is found. Second, it hits the cut-off limit, which means the program is assumed to hang. Third, an unset instruction is encountered, which is considered a late escape.

The third stage continues the search for each of the late escapes. For each late escape the search only focusses on the part of the search space that contains the programs derived from the late escape. Backtracking is only enabled once execution reaches the still unset instruction, after which the search continues. This search may then find multiple programs that terminate successfully, are known to hang, are assumed to hang but possibly also further late escapes. The latter are then in turn examined by follow-up searches.

Identify Equivalent Programs

Before starting the second stage of the search for long-running 7×7 programs, another optimization is possible. Relatively few programs remain after the first stage (just over a million). Running each program until a relatively high cut-off limit takes significant time. It is therefore worth identifying which remaining programs behave equivalently, and only evaluate one.

Programs are considered equivalent for this purpose when they effectively execute the same interpreted program. For equivalence, three differences are ignored. First, the number of steps an interpreted instruction requires does not matter. This does not impact whether or not a programs hangs or terminates. The number of steps after which equivalent programs terminate may vary, but this can be established later.

Second, where an instruction starts on the 2LBB grid does not matter. As long as interpreted instructions behave the same, they are the same. This for example means that when a 2LBB program has two exits, these are considered the same. For purposes of hang detection, it does not matter which exit is executed.

Third, the order in which the instructions occur in the interpreted program does not matter. The interpreted programs are therefore converted to a canonical representation before they are compared to each other.

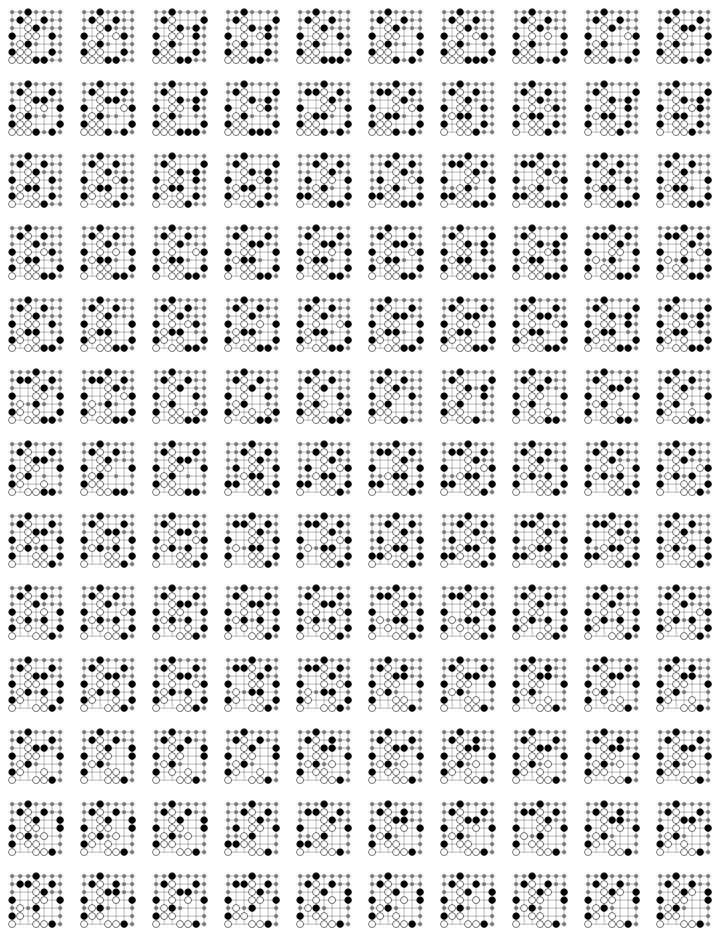

Figure 7 shows 130 different 2LBB programs that are considered equivalent this way. The small grey dots are instructions that are still unset. They have not been visited when executing the program during the Stage 1 search, and therefore are not instantiated. All programs result in the same interpreted program, shown below. The Stage 2 search therefore executes only one instance of this interpreted program to establish if it terminates.

0: INC 1 => -/1

1: SHR 2 => 2/3

2: INC 1 => -/4

3: EXIT

4: SHL 2 => 3/5

5: INC 1 => 6/7

6: ?

7: SHR 1 => 8/9

8: INC 1 => -/10

9: DEC 1 => 11/7

10: SHL 1 => 12/10

11: SHR 1 => 6/4

12: DEC 2 => -/7

There is still one complication that needs to be handled. It follows from the fact that the number of steps for each interpreted instruction may vary. This means that if one program out of the equivalence set is executed and found not to terminate before the cut-off limit, the same does not necessarily apply to all programs in the set. There could still be a program in the set that terminates (or "escapes" to an unset instruction) before the cut-off limit. When this happen, the examined program would behave similarly and do so after executing the same amount of interpreted instructions. However, this apparently corresponds to more steps on the 2D program grid.

In order for the staged search to accurately identify the number of programs that terminate within the cut-off limit and to also be certain that the longest running terminating program within that limit has been found more is needed. The number of execution steps for each instruction in the interpreted program that is evaluated should be the minimum across all programs in the equivalence set. This way it is certain that when this program does not terminate within the limit, none of the programs will.